Introduction

I am writing this article against the backdrop of the COVID-19 pandemic. Unsubstantiated claims on various media – news portals and social media platforms alike – are relentlessly making the rounds. How does one filter the onslaught of information? This article keeps the local situation in mind, but some of the points discussed are universal. Similarly, although it is motivated by this pandemic, many of the themes are applicable to a wider context. Let us have a look at some important things you should keep an eye out for.

I have heard / read a claim by an individual. How can I tell if it is worth taking seriously?

Here are some questions you should ask yourself in the first instance:

- Who is making the claim?

- Is it an officially recognised representative of the health authorities?

- If not, does the person have experience in epidemiology, mathematical modelling, or a closely related scientific discipline?

- Is it their personal opinion?

- Are they representing a company or lobby group?

I have read an article that reports on new results by scientists. Surely that is a reliable source of information?

There are two main points to keep in mind here. One concerns the media, while the other concerns scientific papers themselves. Let us take a look at both.

When it comes to the media, there are four main pitfalls to watch out for:

- Not all media sources are independent; some have their own agenda.

- Some media are simply after clicks to increase revenue. Therefore, they might write sensationalist articles to draw readers to their websites.

- A media platform might have good intentions, but the reporter in question might not have an adequate scientific background. As a result, an article describing scientists’ findings may be inaccurate.

- Sometimes, the title on its own can be misleading. This can be either intentional (to serve as click-bait), or unintentional (e.g. poor choice of words).

Scientific Publishing

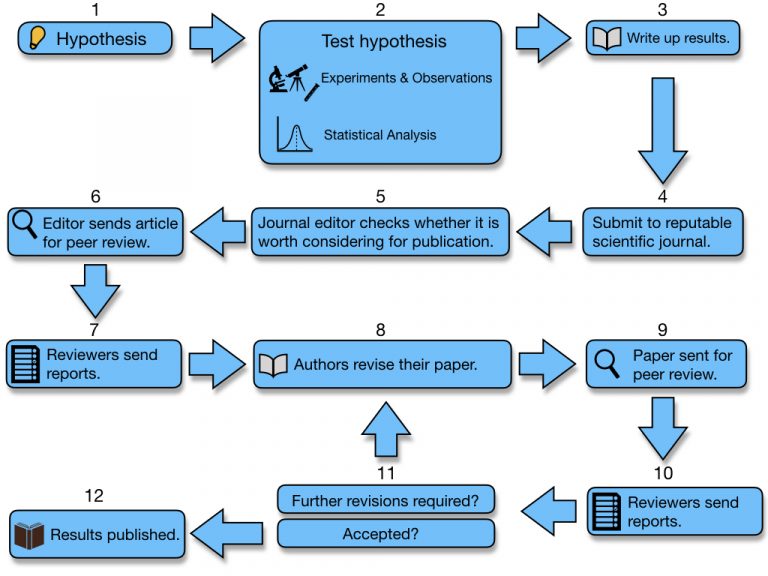

Consider the figure above, which schematically describes the process of scientific publishing. A more detailed discussion follows.

- Step 1: Scientists might come up with an idea. They have a hypothesis about how something might work.

- Step 2: They carry out experiments and observations to test that idea. They ensure the results are reliable.

- Step 3: When they are confident in their findings, they write up their results in a paper, i.e. a detailed article describing their idea, methodology, experiments, results, and conclusions.

- Step 4: They submit the paper to a reputable scientific journal. This is not a newspaper, or a science magazine. This is a specialised journal. There are many journals, each focussed on a specific topic. (A very small number accept papers in multiple fields.)

- Step 5: The journal editor (oftentimes there are many editors, each assigned to a specialised sub-field) takes a look at the paper to see whether it meets standards and fits the scope of the journal in question.

- Step 6: If the editor deems the paper worthy for consideration, it is forwarded to reviewers (also called referees). These are other scientists specialised in the same field who are able to scrutinise the paper. This is an anonymous process. The authors do not know who the reviewers are. (And sometimes, the reviewers do not know who the authors are either.)

- Step 7: The reviewers assess the paper, and each reviewer (usually a minimum of 2, oftentimes 3 or more) writes up a report in which they describe any revisions that are required. It is very rare that reviewers are happy with the first version. Almost always, there will be at least one stage of revision.

- Step 8: The authors receive a report detailing what they need to address. They might have to repeat some experiments, rewrite parts of their report, clarify text, or expand their discussion (to name but a few examples of what might be requested of them). The authors revise the paper accordingly, and write a report responding to each of the reviewers’ points.

- Step 9: The paper is sent back for peer review.

- Step 10: The reviewers (oftentimes the same ones, but sometimes different ones) look at the paper again. They send their new reports back to the editor, and these are forwarded to the authors.

- Step 11: It is common for the reviewers to request more clarification. In this case, the paper is sent back to the authors, and the process is repeated until the reviewers are happy with the paper. Once the reviewers are satisfied with the revised version, the paper is forwarded to the editor for final deliberation. (Most often, at this stage, if the reviewers agree that the paper should be published, the editor will not hold it back.)

- Step 12: The paper is sent to the journal’s proof-readers. The authors approve the proof-read version. Finally, the paper is published.

The publication of a paper is not the end of the process. Following publication, the rest of the scientific community will read and digest the findings. Other scientists will seek to replicate the results, i.e. check whether they arrive at the same findings when they repeat the same experiment(s) and observations. Sometimes, other groups of scientists will write papers that are a direct response to the published article, e.g. criticising aspects of the paper, reporting on conflicting results, or adding to the discussion. If the authors of the first paper themselves eventually find conflicting results (e.g. after carrying out further experiments and studies), they will want to report on this also. When a paper is a good one, other scientists refer to it in their own work.

This is the process of scientific publishing in a nutshell. Is it a perfect system? No. But as you can see, it has in place a number of safeguards to maintain a high level of quality.

Now, you should keep in mind that during Step 4, i.e. when scientists send their results to journals, they oftentimes simultaneously post their draft to an online repository called a pre-print server. At the moment especially, many scientists working on COVID-19 are doing this (e.g. here and here) to expedite dissemination of their results so that other scientists can be made aware of potentially important findings as soon as possible. However, such pre-prints have not been peer-reviewed yet, and they might end up not being accepted for publication. In other words, these pre-prints may be of significant help to other scientists working in the field (and that is the intention behind them), but they are preliminary papers. As a result, media reports about them can be confusing, especially if a short while later their findings are overturned by the results of a different study.

An organisation has issued a statement. How can one tell if its assertions are reliable?

Let us again consider the current pandemic as an example, since it is the most topical issue. The two most reliable sources of information you can find are the World Health Organization (WHO) and the local health authorities. If the organisation in question is neither of these two, then you should be very careful, and you should begin by asking yourself these questions:

- What kind of organisation is it?

- Is it a professional organisation?

- Is it a lobby group or association? (This might mean it has its own vested interests.)

- Has it presented a detailed analysis to back up its claims? Or is it making an assertion without providing any justification (or putting forth just a sketchy argument)?

- Of particular relevance to the topic of the pandemic, you might encounter forecasts about the situation. A statement might make claims about how many cases to expect in the near future, or about how serious the situation is or is not. In this case, it is especially important that you check whether the statement is accompanied by a detailed analysis (as opposed to being a mere assertion).

With regard to the last point, those of a more technical disposition might want to check whether any of the points below have been addressed. It is highly unlikely that the layperson would go into this level of detail, but a journalist might. In any case, most people would not be able to carry out a critical analysis of these points, but checking whether they are discussed at all can say a lot about the credibility of an organisation’s assertion(s).

- Was a mathematical model applied? If so, is it specified which one?

- Is it an epidemiological model? If so, what kind? (There are several kinds, e.g. deterministic compartmental models*, and stochastic models.)

- Does the analysis talk about the limitations of the model?

- Does it discuss deficiencies in the data it is using?

- Does it discuss the sampling strategy that was used?

- Were changes in the sampling strategy considered?

- Is there a detailed analysis about error estimation?

- Are confidence intervals presented, and clearly explained?

- Is there any averaging going on?

- If so, what kind of average is being employed? Is it a moving average? What window size is used? Why was that specific size chosen? Is there any discussion of how sensitive the results are to a change in this number?

- Was the analysis carried out by experts in the field?

- Be wary when comparisons are drawn with other countries. The testing strategy is likely to be different. The sample selection is likely to be different. The number of people who have been tested will be different. Population size, demographics, and dynamics are different. In other words, such comparisons are to be approached with utmost caution.

- Be wary of statements that are based entirely on whether the number of cases is growing or decreasing. This might take the form of: “Last week we had fewer cases than this week, so the situation is now much worse”, or “This week we have fewer cases than last week, so the situation is getting better”. The number of cases on its own is meaningless. How many people have been tested? Did the sampling strategy change?

- Be wary of claims that make use of hyperbole. If you see words like ‘disastrous’, ‘catastrophic’, ‘tragic’, ‘devastating’, or, at the other end of the spectrum, ‘fantastic’, ‘great’, ‘extraordinary’, ‘astonishing’, etc. thrown about in every other sentence, then you should be very careful. Most likely, it is the article in question that is both catastrophic and astonishing in equal measure. A detailed analysis will oftentimes result in a nuanced report. This is not to say that the situation at some point in time might not actually be very good or very bad. However, the more forceful a given claim is, the more detailed and convincing the presented evidence should be. At all times, keep this in mind: claims on their own are not worth much unless they are accompanied by valid argumentation and clear evidence. Simply arguing from authority (argumentum ad verecundiam) or appealing to the public (argumentum ad populum) is not admissible in science. You yourself might have noticed how the tone differs when a statement is made by public health officials (reasoned, calm, and collected) as opposed to other individuals or entities where, as it has been put, evidence-based reasoning is replaced by eminence-based reasoning.
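
To make the points above about moving averages and raw case counts more concrete, here is a minimal Python sketch. The daily figures are invented purely for illustration, and the function names `moving_average` and `positivity_rate` are hypothetical helpers, not part of any official methodology. The sketch shows how the choice of window size changes a smoothed curve, and why a jump in cases can mean little if the number of tests jumped too.

```python
def moving_average(values, window):
    """Trailing moving average over `window` days.

    The first `window - 1` days have no average, so the result is shorter
    than the input by `window - 1` entries.
    """
    if window < 1 or window > len(values):
        raise ValueError("window must be between 1 and len(values)")
    return [
        sum(values[i - window + 1 : i + 1]) / window
        for i in range(window - 1, len(values))
    ]


def positivity_rate(cases, tests):
    """Fraction of tests that came back positive, day by day."""
    return [c / t for c, t in zip(cases, tests)]


# Hypothetical data (assumption): day 4 has three times as many tests.
daily_cases = [10, 12, 9, 30, 11, 13, 12, 14]
daily_tests = [500, 600, 450, 1500, 550, 650, 600, 700]

ma3 = moving_average(daily_cases, 3)  # short window: reacts strongly to the spike
ma7 = moving_average(daily_cases, 7)  # long window: smooths the spike out
rates = positivity_rate(daily_cases, daily_tests)

print("3-day average:", [round(x, 2) for x in ma3])
print("7-day average:", [round(x, 2) for x in ma7])
print("positivity:   ", [round(x, 3) for x in rates])
```

In this made-up data, the spike of 30 cases coincides with three times as many tests, so the positivity rate stays flat at 2% throughout. The 3-day average peaks at 18, whereas the 7-day average never exceeds roughly 14.4. The same data can therefore tell quite different stories depending on the window size and on whether testing volume is taken into account.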

How does a mathematical model work?

In the video below (in Maltese), I describe one of the epidemiological compartmental models (SEIR). This video is presented for educational purposes only. Learning how the discussed model works does not mean you should try to predict future trends yourself.
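
For readers who prefer code, here is a minimal Python sketch of a deterministic SEIR model in its common textbook form, in which people move from Susceptible to Exposed to Infectious to Recovered. It is not necessarily the exact formulation used in the video. The parameter names (`beta`, `sigma`, `gamma`), their values, the population size, and the simple Euler time-stepping are all assumptions chosen for illustration. Like the video, it is meant for educational purposes only and not for forecasting.

```python
def simulate_seir(population, initial_exposed, beta, sigma, gamma, days, dt=0.1):
    """Integrate the textbook SEIR equations with a simple Euler scheme.

    beta  : transmission rate per day (assumed value)
    sigma : rate at which exposed people become infectious (1 / incubation period)
    gamma : rate at which infectious people recover (1 / infectious period)
    Returns a list of (day, S, E, I, R) tuples, one per day.
    """
    s = float(population - initial_exposed)
    e = float(initial_exposed)
    i = 0.0
    r = 0.0
    history = [(0, s, e, i, r)]
    steps_per_day = round(1 / dt)
    for day in range(1, days + 1):
        for _ in range(steps_per_day):
            new_exposed = beta * s * i / population * dt  # S -> E
            new_infectious = sigma * e * dt               # E -> I
            new_recovered = gamma * i * dt                # I -> R
            s -= new_exposed
            e += new_exposed - new_infectious
            i += new_infectious - new_recovered
            r += new_recovered
        history.append((day, s, e, i, r))
    return history


# Illustrative parameters only (assumptions, not fitted to any real data).
history = simulate_seir(
    population=100_000, initial_exposed=10,
    beta=0.5, sigma=0.2, gamma=0.1, days=200,
)

peak_day, _, _, peak_infectious, _ = max(history, key=lambda row: row[3])
print(f"Infectious compartment peaks on day {peak_day} "
      f"with about {peak_infectious:.0f} people")
```

Changing any of the assumed parameters even slightly shifts the timing and height of the peak considerably, which is one reason why the limitations of a model and the uncertainty in its inputs deserve explicit discussion.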